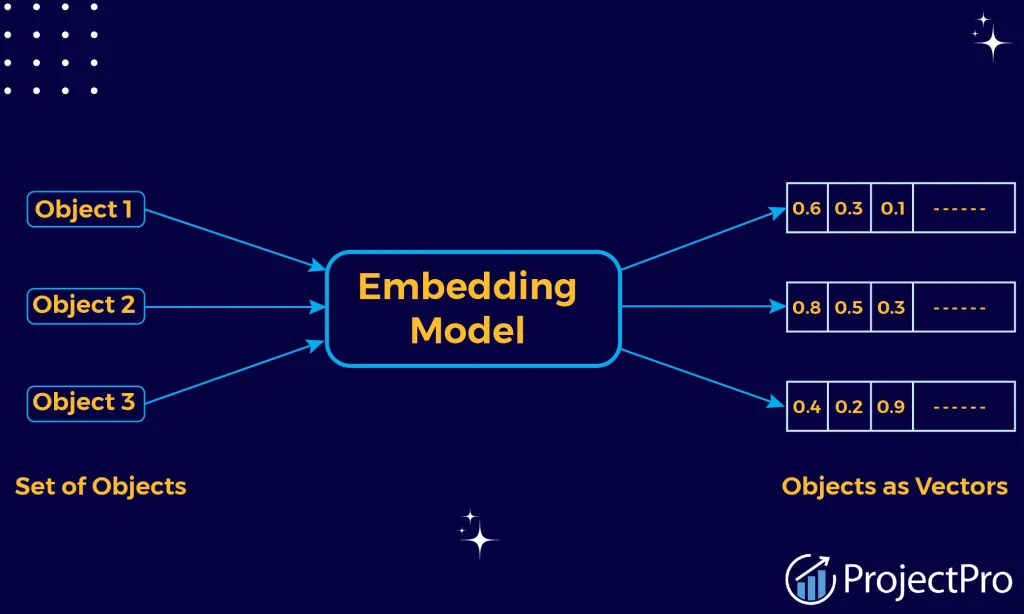

Vector embeddings are a way to convert words, sentences and other data into numbers that capture their meaning and relationships.

Embeddings are among the building blocks of artificial intelligence (AI), allowing computers to model the connections between words and other objects. In a technical sense, embeddings are vectors produced by machine learning models to capture useful information about each object.

Vector embeddings are one of the fundamental techniques behind the rapid progress of machine learning in recent years. In this blog article, we will discuss what embeddings are, how they work, and why machine learning relies so heavily on them.

What Are Embeddings?

Embeddings automatically extract meaningful features from raw data and represent them as numeric vectors. This has numerous applications, ranging from computer vision tasks like image classification to natural language processing tasks like sentiment analysis. In essence, embeddings enable us to transform unstructured, raw data into a format better suited for machine learning algorithms.

One way to think about embeddings is as a form of automated feature extraction. Raw data often contains a large volume of information that is hard for a machine learning model to interpret directly. With embeddings, we can automatically extract (or “learn”) the most relevant and useful features from this data and represent them compactly.

These embeddings are usually learned by a machine learning model themselves, and they can then significantly improve the performance of other machine learning models on downstream tasks.

Types of Vector Embeddings

Vector embeddings come in a variety of forms that are frequently employed in diverse applications. Here are a few examples:

- Word embeddings represent individual words as vectors. They are learned via methods like Word2Vec, GloVe, and FastText, which extract semantic relationships and contextual information from massive text corpora.

- Sentence embeddings represent whole sentences as vectors. Models such as SkipThought and Universal Sentence Encoder (USE) produce embeddings that capture a sentence’s context and overall meaning.

- Document embeddings use vectors to represent documents, which can be anything from novels to academic papers to newspaper stories. They aim to capture the context and semantic content of the entire text. Document embeddings are learned with methods such as Doc2Vec, an implementation of the Paragraph Vector approach.

- Image embeddings represent images as vectors that capture their visual characteristics. For tasks like image classification, object detection, and image similarity, convolutional neural networks (CNNs), including pre-trained models like ResNet and VGG, are used to build image embeddings.

- User embeddings are vector representations of users within a system or platform. They record the traits, actions, and preferences of the user. User segmentation, tailored marketing, and recommendation algorithms are just a few applications that can benefit from using user embeddings.

- Product embeddings are vector representations of products in recommendation or e-commerce systems. They record a product’s characteristics, features, and any other semantic data that is available. Algorithms can then use these vectors to compare, recommend, and analyze products.
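
To make the step from word embeddings to sentence embeddings more concrete, here is a minimal sketch of one simple baseline: averaging the word vectors of a sentence (often called mean pooling). This is not how SkipThought or USE work internally. The two-dimensional vectors and the helper names `mean_pool` and `embed_sentence` are invented for illustration; real word embeddings are learned from data and typically have hundreds of dimensions.

```python
def mean_pool(word_vectors):
    """Average a list of equal-length word vectors into a single vector."""
    dims = len(word_vectors[0])
    count = len(word_vectors)
    return [sum(vec[i] for vec in word_vectors) / count for i in range(dims)]


def embed_sentence(sentence, vectors):
    """Embed a sentence by averaging the vectors of the words we know."""
    tokens = sentence.lower().split()
    known = [vectors[token] for token in tokens if token in vectors]
    if not known:
        raise ValueError("sentence contains no known words")
    return mean_pool(known)


# Toy 2-dimensional word vectors, made up for this example only.
toy_word_vectors = {
    "the": [0.0, 0.0],
    "movie": [0.2, 0.6],
    "was": [0.0, 0.0],
    "great": [1.0, 0.4],
}

sentence_vector = embed_sentence("The movie was great", toy_word_vectors)
print(sentence_vector)
```

Averaging ignores word order, which is one reason dedicated sentence and document models exist.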

Vector Embedding in Machine Learning

The appeal of these vectors is that they represent relationships well. Vector embeddings place related data points close together, much as a thesaurus groups related words, so that words like “king” and “queen” end up near one another. As a result, algorithms can find patterns and relationships that would have stayed hidden in the original, unstructured data.
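
“Close together” is commonly measured with cosine similarity, which compares the direction of two vectors. The sketch below assumes hand-made three-dimensional vectors for “king”, “queen”, and “apple”; the numbers are invented for illustration and do not come from any trained model.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors (1.0 = same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Hand-made toy vectors, invented for illustration only.
toy_vectors = {
    "king": [0.90, 0.80, 0.10],
    "queen": [0.85, 0.90, 0.15],
    "apple": [0.10, 0.20, 0.95],
}

royal = cosine_similarity(toy_vectors["king"], toy_vectors["queen"])
fruit = cosine_similarity(toy_vectors["king"], toy_vectors["apple"])
print(f"king vs queen: {royal:.3f}")
print(f"king vs apple: {fruit:.3f}")
```

With these toy numbers, “king” scores much higher against “queen” than against “apple”, which is the behavior a trained embedding model is expected to show for related words.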

This ability opens up a wide range of machine learning possibilities. Here are a few examples:

- Unlocking the Secrets of Text: Embeddings allow machines to perform sentiment analysis and language translation in Natural Language Processing (NLP). A system that models word relationships can translate a statement from English to Spanish more accurately or interpret the emotional tone of a review.

- Recommending What You Like: Have you ever wondered how those remarkably precise product suggestions show up on your screen? Recommendation engines make use of embeddings. By converting user preferences and product attributes into vectors, the algorithm can recognize users with similar tastes and recommend things they might like.

- Seeing Through Machine Eyes: Embeddings play a crucial role in image recognition by enabling machines to interpret visual input. By translating image data into vectors, algorithms can recognize similar items or scenes across photos. This serves as the foundation for facial recognition software and for programs that help you classify and organize your photo collection.
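
The recommendation idea above can be sketched in a few lines: represent the user and each product as vectors in the same space, score every product by its dot product with the user vector, and return the highest-scoring ones. The three dimensions (outdoor, tech, cooking), the product names, and the `recommend` helper are all hypothetical; production systems learn these vectors from interaction data.

```python
def dot(a, b):
    """Dot product of two equal-length vectors."""
    return sum(x * y for x, y in zip(a, b))


def recommend(user_vector, product_vectors, top_n=2):
    """Return the names of the top_n products that best match the user vector."""
    ranked = sorted(
        product_vectors,
        key=lambda name: dot(user_vector, product_vectors[name]),
        reverse=True,
    )
    return ranked[:top_n]


# Hypothetical dimensions: [outdoor, tech, cooking]. Values are made up.
products = {
    "hiking boots": [0.9, 0.1, 0.0],
    "tent": [0.8, 0.2, 0.1],
    "laptop": [0.0, 0.9, 0.1],
    "chef's knife": [0.1, 0.0, 0.9],
}
user = [0.7, 0.3, 0.1]  # a user who mostly likes outdoor products

suggestions = recommend(user, products)
print(suggestions)
```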

Vector embeddings even let us visualize the relationships within the data. By projecting high-dimensional data onto a lower-dimensional space, we can observe how various data points cluster together. This reveals hidden patterns and connections that may be hard to see in the raw numbers alone.
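
One common way to do such a projection is principal component analysis (PCA). The sketch below approximates the first two principal components with power iteration, using only the standard library; in practice a library routine would normally be used instead. The four-dimensional toy embeddings, their labels, and the helper names are assumptions made for this example.

```python
import math
import random


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def center(points):
    """Subtract the per-dimension mean so the data is centered at the origin."""
    dims = len(points[0])
    means = [sum(p[i] for p in points) / len(points) for i in range(dims)]
    return [[p[i] - means[i] for i in range(dims)] for p in points]


def covariance(centered):
    """Sample covariance matrix of already-centered points."""
    dims = len(centered[0])
    n = len(centered)
    return [[sum(p[i] * p[j] for p in centered) / (n - 1) for j in range(dims)]
            for i in range(dims)]


def top_component(matrix, iterations=200, seed=0):
    """Approximate the dominant eigenvector of a symmetric matrix by power iteration."""
    rng = random.Random(seed)
    vec = [rng.random() for _ in matrix]
    for _ in range(iterations):
        vec = [dot(row, vec) for row in matrix]
        norm = math.sqrt(dot(vec, vec))
        if norm == 0.0:  # no variance left in any direction
            return vec
        vec = [v / norm for v in vec]
    return vec


def project_2d(points):
    """Project points onto their (approximate) first two principal components."""
    centered = center(points)
    cov = covariance(centered)
    first = top_component(cov)
    # Deflation: remove the variance explained by the first component.
    eigenvalue = dot(first, [dot(row, first) for row in cov])
    size = len(cov)
    deflated = [[cov[i][j] - eigenvalue * first[i] * first[j] for j in range(size)]
                for i in range(size)]
    second = top_component(deflated)
    return [[dot(p, first), dot(p, second)] for p in centered]


# Toy 4-dimensional embeddings, invented for illustration: three "animal"
# vectors followed by three "vehicle" vectors.
labels = ["cat", "dog", "horse", "car", "truck", "bus"]
toy_embeddings = [
    [0.90, 0.80, 0.10, 0.00],
    [0.80, 0.90, 0.20, 0.10],
    [0.85, 0.75, 0.05, 0.10],
    [0.10, 0.00, 0.90, 0.80],
    [0.20, 0.10, 0.80, 0.90],
    [0.05, 0.15, 0.85, 0.75],
]

projected = project_2d(toy_embeddings)
for label, (x, y) in zip(labels, projected):
    print(f"{label:>6}: ({x:+.3f}, {y:+.3f})")
```

On this toy data the two groups land on opposite sides of the first axis, which is the kind of clustering a 2D scatter plot of real embeddings is meant to reveal.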

Do vectors and embeddings have the same meaning?

In the context of vector embeddings, the terms “vector” and “embedding” are used interchangeably: both refer to a numerical representation in which every data point is a vector in a high-dimensional space. Strictly speaking, a vector is simply an array of numbers with a given dimension, while a vector embedding uses such a vector to represent a data point in a continuous space.

A vector is called an embedding when it has been learned by a machine learning model to capture the important details, semantic connections, or contextual features of the data it represents.

How Embeddings Are Interpreted by Machine Learning Models

There are several specialized models available for embedding different kinds of data. For instance, the Word2Vec model can be used to embed words; the MiniLM-L6-v2 model can be used to embed sentences; Whisper from OpenAI can be used to embed speech; CLIP can be used to embed images; and ImageBind can be used to embed several modalities, such as images, text, and audio, in one shared space.

Embeddings are especially useful for knowledge mapping and semantic search. Recommendation systems, data clustering and classification, document analysis, sentiment analysis, and information retrieval are just a few examples of the many potential applications.

Conclusion

In conclusion, vector embeddings are like the Rosetta Stone of machine learning: they help machines understand the language of data. They power machine learning by revealing meaningful relationships and compressing data into a usable form, opening the door to smarter and more efficient applications.